Recently there has been a renewed discussion about inappropriate YouTube videos that have been injected into children’s content on the platform. This inappropriate material often contains lewd acts, violence, and other adult content.

There is an abundance of child-friendly and educational content on YouTube. There are videos that help kids learn their colors, numbers, the alphabet, and more. Often these videos feature popular characters, such as Anna and Elsa from Frozen, Spider-Man, the Paw Patrol pups, and Peppa Pig.

Children recognize these beloved characters and click on the video. It may start off normally, but then suddenly the video takes a dark turn. These characters can go from singing and dancing to suddenly committing sex acts or acts of violence.

Why would someone inject foul content into children’s videos?

There is not a simple answer for why someone would want to compromise the children’s content on YouTube.

On one hand, there is the potential for a profit via ad revenue.

Children will watch a YouTube video over and over again. YouTube channels that display ads earn a small amount of money for each view they generate, so repeat viewing adds up.

It’s relatively easy to produce a large volume of children’s video content. The quality standards are lower, the software needed is easily available, and there is no need for originality. Kids will repeatedly watch videos of the same nursery rhymes, even if each video is similar or almost identical to the previous one.

Once a channel has enough children watching and a large supply of videos at its disposal, the ad money comes rolling in.

On the other hand, there is an unfortunate subset of internet culture that simply delights in causing disruption. These people may want to mess with children’s YouTube content just for fun.

What is YouTube doing about it?

YouTube claims to have removed over 150,000 videos with disturbing children’s content as of November 2017. They have also demonetized over 3 million videos with questionable content.

They have pledged to ramp up their review process when videos are uploaded to the platform and they have also updated their policy on age-restricted videos, making them ineligible for monetization.

Although their response is encouraging, it does not change the fact that inappropriate content is still widely available on the platform and will continue to be, because some of the content still falls safely within YouTube’s guidelines.

It’s important to remain proactive and vigilant when allowing your children to access YouTube.

What is the Momo Challenge?

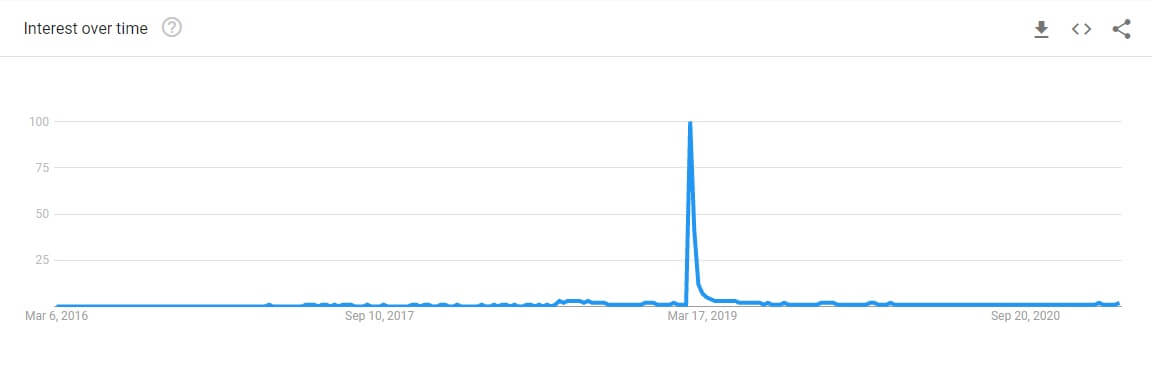

Talk of the Momo Challenge first appeared online around July 2018, at the same time that YouTuber ReignBot made a video about the “overnight urban legend”. Inexplicably, interest shot up around March 2019 when a picture of Momo began to go viral.

“Momo” is the name that’s been attributed to a creepy statue made by a Japanese special effects company. The actual name of the statue is “Mother Bird” and it was originally made and put on display at an art show in 2016.

Much like any other internet meme, it’s hard to explain how this picture began proliferating throughout the internet years after its inception.

Regardless of how it happened, the picture began gaining popularity on websites like Reddit, which is a hugely popular site where users share pictures, news articles, and other forms of media in an online forum setting.

As the picture of “Momo” made its way around the internet, a sinister story was somehow tacked on. The story changes depending on the website where the picture is shared, but in general, the message claims that those who stumble across the image are being encouraged to commit acts of violence or self-harm.

Don’t Be Fooled by the “Momo Challenge” Hoax

Because of the shock value of both the picture and the sensational story behind it, rumors began circulating that people, children in particular, had actually harmed themselves after encountering Momo’s photo.

The rumors were given new life when the Police Service of Northern Ireland issued a warning about the “Momo Challenge” on Facebook.

To date, there is no actual evidence of anyone harming themselves because of the “Momo Challenge”.

However, because Momo is receiving such intense scrutiny and attention, it is quickly being adopted by people who are looking to capitalize on the viral opportunity, stoke fear and have a laugh at the expense of others.

What can you do to protect your children from inappropriate YouTube videos?

1. Use the YouTube Kids app on your tablets and mobile devices

You will need a Google account in order to set up this app. Although the app will automatically filter out age-restricted content, some of the disturbing videos that were created to bypass content filters can still seep through, so don’t stop here.

2. Turn off the search feature in the YouTube Kids app

When setting up the app for the first time, you have the opportunity to turn off the search function.

If your app is already in use and you want to disable search, click the lock icon in the bottom-right corner and then enter the parent passcode.

Click the Settings option, then click on the child profile you would like to edit. Enter the password for your Google account.

On the next screen, you will have the ability to turn off the search option.

3. Report and block inappropriate content from within the app

If you discover a video that you think should be removed from the YouTube Kids platform, you can click the three dots in the top right-hand corner of the video and select either Report or Block.

You can block the video itself or the entire channel.

4. Do not allow your children to watch videos while unattended

It may seem like common sense, but the best way to know what content your children are exposed to is to watch it with them. Even if you are not actively engaged, at least keep your child close to you so that you notice quickly if they come across an inappropriate video.